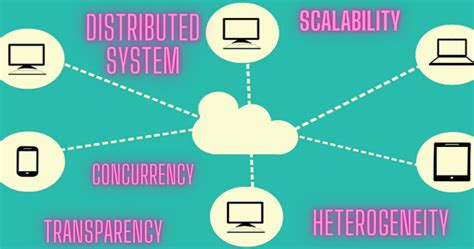

Distributed systems are changing quickly in 2025, from how they operate and communicate to how they manage data across multiple nodes. This guide offers practical, actionable advice on the main trends. Whether you’re a developer, a system architect, or a tech enthusiast, it will help you understand these trends and apply them in your own projects.

Introduction to Distributed System Trends, Fall 2025

Distributed systems have become the backbone of modern applications: they let an application scale across many machines and keep working even when parts of the system fail. In 2025, the landscape is changing rapidly. Developments in cloud computing, edge computing, microservices architecture, and networking protocols are redefining how these systems operate. This guide walks through these changes with step-by-step guidance, real-world examples, and practical solutions to common challenges.

The goal is to give you actionable advice for applying these trends in your projects and for building more resilient, efficient, and scalable distributed systems.

Immediate Action: Adapting to the Changing Landscape

The first step in keeping your distributed systems up to date is to stay informed about the latest trends. Here’s what you can do right away:

- Subscribe to reputable technology journals and newsletters that focus on distributed systems.

- Join professional communities and forums where experts discuss the latest trends.

- Participate in webinars and online courses that cover new technologies and methodologies.

By taking these actions, you’ll stay ahead of the curve and be well-prepared to implement the new trends in your own systems.

Quick Reference: Key Takeaways

- Immediate action item: Set up a routine for monitoring the latest distributed system trends.

- Essential tip: Use container orchestration tools like Kubernetes to manage microservices efficiently.

- Common mistake to avoid: Failing to account for network latency between edge nodes and central systems when deploying edge computing solutions.

The Rise of Microservices Architecture

Microservices architecture is one of the most prominent trends in distributed systems today. It breaks down applications into small, loosely coupled services that can be developed, deployed, and scaled independently.

Implementing microservices offers several benefits, including improved scalability, flexibility, and resilience. However, it also introduces new challenges such as increased complexity in system management and communication overhead.

Why Microservices?

Microservices offer several advantages:

- Independent deployment: Each service can be deployed independently without affecting others.

- Technology diversity: Different services can use different technologies and programming languages.

- Scalability: Services can be scaled individually based on demand.

- Fault isolation: Failures in one service do not necessarily bring down the entire application.
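Fault isolation does not happen automatically: a caller that keeps waiting on a failing dependency can fail along with it. One common way to contain this is a circuit breaker, which stops calling a dependency after repeated failures and fails fast until a cool-down period has passed. The following is a minimal sketch of that idea, not a production implementation. The class name, the threshold, and the timeout values are illustrative assumptions.

```python
import time
from typing import Any, Callable, Optional


class CircuitOpenError(Exception):
    """Raised when the breaker is open and calls are rejected immediately."""


class CircuitBreaker:
    """Illustrative circuit breaker; names and defaults are assumptions."""

    def __init__(
        self,
        failure_threshold: int = 3,
        reset_timeout: float = 30.0,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self.failure_threshold = failure_threshold
        self.reset_timeout = reset_timeout
        self._clock = clock  # injectable so the behavior can be tested
        self._failures = 0
        self._opened_at: Optional[float] = None

    def call(self, func: Callable[..., Any], *args: Any, **kwargs: Any) -> Any:
        # While open and still inside the cool-down window, fail fast.
        if self._opened_at is not None:
            if self._clock() - self._opened_at < self.reset_timeout:
                raise CircuitOpenError("dependency marked unhealthy")
            # Cool-down elapsed: let one trial call through (half-open).
        try:
            result = func(*args, **kwargs)
        except Exception:
            self._failures += 1
            if self._failures >= self.failure_threshold:
                # Open (or re-open) the breaker and restart the cool-down.
                self._opened_at = self._clock()
            raise
        # Any success closes the breaker and clears the failure count.
        self._failures = 0
        self._opened_at = None
        return result
```

A caller would wrap each outbound request, for example `breaker.call(fetch_inventory, item_id)` with a hypothetical `fetch_inventory` function, and treat `CircuitOpenError` as a signal to return a fallback response instead of waiting on the unhealthy service.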

Getting Started with Microservices

To start implementing microservices, follow these steps:

- Identify services: Break down your application into distinct, manageable services based on business capabilities.

- Choose a technology stack: Select appropriate technologies for each microservice, considering aspects like performance, cost, and developer expertise.

- Implement APIs: Develop APIs for each microservice to facilitate communication between them.

- Containerize: Use container technologies like Docker to package and deploy microservices.

- Manage orchestration: Employ orchestration tools like Kubernetes to manage and scale your microservices effectively.

For example, consider an e-commerce application. Breaking it down into microservices for user management, product catalog, order processing, and payment processing lets you develop and deploy each component independently. A change to the product catalog, for instance, does not require redeploying payment processing.
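To make the "Implement APIs" step concrete, here is a minimal sketch of what a product catalog service could look like using only the Python standard library. The routes, the sample data, and the names `route`, `CatalogHandler`, and `make_server` are assumptions made for illustration; a real service would typically use a web framework and its own datastore.

```python
import json
from http.server import BaseHTTPRequestHandler, HTTPServer
from typing import Tuple

# Hypothetical in-memory data standing in for the service's own datastore.
PRODUCTS = {
    "1": {"id": "1", "name": "Keyboard", "price_cents": 4500},
    "2": {"id": "2", "name": "Mouse", "price_cents": 1900},
}


def route(method: str, path: str) -> Tuple[int, dict]:
    """Map a request to (HTTP status, JSON-serializable body)."""
    if method != "GET":
        return 405, {"error": "method not allowed"}
    if path == "/products":
        return 200, {"products": list(PRODUCTS.values())}
    if path.startswith("/products/"):
        product = PRODUCTS.get(path[len("/products/"):])
        if product is not None:
            return 200, product
    return 404, {"error": "not found"}


class CatalogHandler(BaseHTTPRequestHandler):
    """Thin HTTP wrapper around route(); keeps the logic easy to test."""

    def do_GET(self) -> None:
        status, body = route("GET", self.path)
        payload = json.dumps(body).encode("utf-8")
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(payload)))
        self.end_headers()
        self.wfile.write(payload)


def make_server(port: int = 8080) -> HTTPServer:
    """Build the server; call .serve_forever() on the result to run it."""
    return HTTPServer(("127.0.0.1", port), CatalogHandler)
```

Running `make_server().serve_forever()` would expose the catalog on port 8080. Keeping the routing logic in a plain function separate from the HTTP handler is a design choice that makes the service testable without opening a socket.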

Embracing Edge Computing

Edge computing involves processing data closer to the source of data generation rather than relying solely on centralized data centers. This approach reduces latency, saves bandwidth, and enhances the responsiveness of applications.

Benefits of Edge Computing

Edge computing offers several key benefits:

- Reduced latency: Data is processed closer to where it’s generated, reducing the time it takes to process and respond.

- Bandwidth optimization: By processing data at the edge, less data needs to be sent to central data centers.

- Improved real-time analytics: Edge computing enables real-time data processing and analytics.

- Enhanced privacy: Sensitive data can be processed and stored locally rather than being sent to cloud servers.

Implementing Edge Computing

To implement edge computing, follow these steps:

- Identify use cases: Determine which applications or processes benefit most from edge computing, such as IoT devices, smart cities, or autonomous vehicles.

- Deploy edge devices: Set up edge nodes (devices or servers) close to the data sources.

- Develop edge applications: Create applications that can run on edge devices to process data locally.

- Connect to central systems: Ensure that edge devices can communicate with central systems when necessary for data aggregation and storage.

- Manage security: Implement robust security measures to protect data processed at the edge.

For instance, in a smart city scenario, sensors spread across the city generate data that needs to be processed in real time. By deploying edge computing solutions, you can process sensor data on local edge nodes to make timely traffic management decisions, reducing the need to send all data to a central cloud server.
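The bandwidth saving in this scenario comes from sending summaries upstream instead of raw readings. The sketch below shows one way an edge node might reduce a batch of traffic sensor readings to a small summary before forwarding it. The data fields, the `summarize` function, and the congestion threshold are all hypothetical values chosen for illustration.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Iterable, List


@dataclass
class Reading:
    sensor_id: str
    vehicles_per_minute: float


@dataclass
class Summary:
    sensor_count: int
    mean_vehicles_per_minute: float
    congested_sensors: List[str]


def summarize(
    readings: Iterable[Reading], congestion_threshold: float = 40.0
) -> Summary:
    """Reduce raw readings to the small summary sent to the central system."""
    batch = list(readings)
    if not batch:
        return Summary(0, 0.0, [])
    # Sensors with at least one reading at or above the (assumed) threshold.
    congested = sorted(
        {r.sensor_id for r in batch if r.vehicles_per_minute >= congestion_threshold}
    )
    return Summary(
        sensor_count=len({r.sensor_id for r in batch}),
        mean_vehicles_per_minute=mean([r.vehicles_per_minute for r in batch]),
        congested_sensors=congested,
    )
```

The edge node can act on `congested_sensors` locally, for example to adjust signal timing, and forward only the `Summary` object to the central system at whatever interval the use case requires.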

Practical FAQ: Answering Common Questions

How do I balance microservices and monolithic architecture?

Balancing microservices and a monolithic architecture is best approached incrementally. If you’re migrating from a monolithic system, start by identifying the parts of the application that would benefit most from being deployed and scaled independently. Extract smaller, self-contained services first, then gradually refactor larger components as you gain experience and confidence in managing microservices. Throughout the migration, keep inter-service communication efficient and the architecture flexible and maintainable.
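One way to support this kind of gradual migration, often called the strangler fig pattern, is to put a routing layer in front of the monolith and redirect paths to new services as they are extracted. The sketch below shows only the routing decision. The URLs, the path prefixes, and the `resolve_backend` function are hypothetical.

```python
from typing import Dict

# Hypothetical address of the existing monolith.
MONOLITH_URL = "http://monolith.internal"


def resolve_backend(
    path: str, migrated: Dict[str, str], default: str = MONOLITH_URL
) -> str:
    """Return the backend for a request path.

    Paths under a migrated prefix go to the extracted service (the longest
    matching prefix wins); everything else still goes to the monolith.
    """
    matches = [
        prefix
        for prefix in migrated
        if path == prefix or path.startswith(prefix.rstrip("/") + "/")
    ]
    if not matches:
        return default
    return migrated[max(matches, key=len)]
```

With a table such as `{"/payments": "http://payments.internal"}`, requests to `/payments/123` would reach the new service while all other paths continue to hit the monolith, so each extraction can be rolled out or rolled back by editing one entry.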

What are the best practices for managing microservices?

Managing microservices effectively involves several best practices:

- Service autonomy: Ensure that each microservice is independent and can be deployed and updated without affecting others.

- API management: Use well-defined APIs for communication between services, and maintain API documentation.

- Service discovery: Implement service discovery mechanisms to allow services to find and communicate with each other dynamically.

- Centralized logging and monitoring: Use centralized logging and monitoring tools to track the health and performance of each service.

- Security: Implement security measures at the network, service, and data levels to protect microservices from threats.
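As an illustration of the service discovery practice above, the following sketch shows a tiny in-memory registry in which instances announce themselves with heartbeats and are dropped from lookups once they go quiet. It is a teaching sketch under stated assumptions, not a replacement for a real discovery system; the class name, the method names, and the 15-second TTL are all hypothetical.

```python
import time
from typing import Callable, Dict, List, Tuple


class ServiceRegistry:
    """Illustrative heartbeat-based registry; not a production design."""

    def __init__(
        self,
        ttl_seconds: float = 15.0,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self._ttl = ttl_seconds
        self._clock = clock  # injectable so expiry can be tested
        # (service name, address) -> time of last heartbeat
        self._last_seen: Dict[Tuple[str, str], float] = {}

    def heartbeat(self, service: str, address: str) -> None:
        """Register an instance or refresh its liveness timestamp."""
        self._last_seen[(service, address)] = self._clock()

    def deregister(self, service: str, address: str) -> None:
        """Remove an instance explicitly, e.g. on graceful shutdown."""
        self._last_seen.pop((service, address), None)

    def lookup(self, service: str) -> List[str]:
        """Return addresses whose last heartbeat is within the TTL."""
        now = self._clock()
        return sorted(
            address
            for (name, address), seen in self._last_seen.items()
            if name == service and now - seen <= self._ttl
        )
```

Each service instance would call `heartbeat` on a timer, and callers would use `lookup("orders")` to get the currently live addresses. Expiring entries by TTL means a crashed instance disappears from results without needing to deregister itself.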

Conclusion

The distributed systems trends of fall 2025, microservices architecture and edge computing chief among them, are changing how applications are built and operated. By staying informed and adopting these trends deliberately, you can build more efficient, resilient, and scalable systems. The key is a balanced approach that combines the best practices and tools available. Stay curious, keep learning, and don’t hesitate to experiment with new technologies to discover what works best for your projects.