The age-old question of whether 0 truly counts as a number has sparked debates among mathematicians and scholars for centuries. From a foundational perspective, understanding this notion is crucial for grasping basic arithmetic, number theory, and even more advanced mathematical concepts.

As we dive into this fascinating topic, we’ll explore the nuances surrounding the classification of 0, its role in mathematical systems, and the implications it holds in various fields.

Key Insights

- The debate over whether 0 is a number or not highlights its unique place in mathematics.

- Under many conventions, 0 is the first natural number, and it marks the origin of the number line.

- In practical applications, treating 0 as a number underpins conventions such as zero-based indexing in programming.

Understanding the nature of 0 requires a look at the historical and philosophical side of mathematics. The concept of 0 has evolved significantly over time. In ancient civilizations such as those of the Babylonians and the Maya, a symbol for 0 existed, but it served as a placeholder in positional notation rather than as a number in its own right. Indian mathematicians were the first to treat 0 as a number with its own arithmetic rules, notably Brahmagupta in the 7th century, and this laid the groundwork for the number system we use today.

The Mathematical Perspective

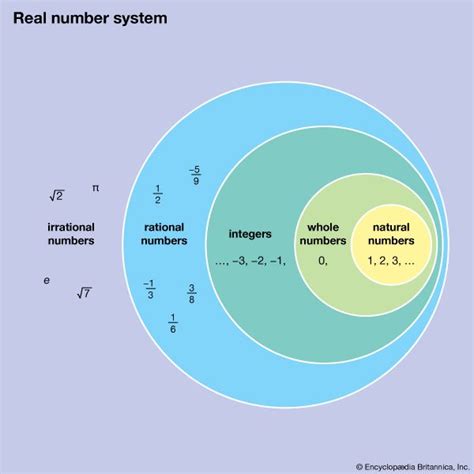

From a mathematical standpoint, 0 is embedded in the number line as its origin. It separates the positive integers from the negative ones, and it is the only integer that is neither. In set theory, 0 is often treated as an element of the set of natural numbers, although conventions differ on whether that set starts at 0 or at 1. Seen this way, 0 is indispensable for defining arithmetic operations, including addition, subtraction, and particularly multiplication.

Zero plays a pivotal role in the rules of arithmetic. For instance, any number multiplied by zero equals zero. This is the zero property of multiplication (0 is the absorbing element), and it should not be confused with the multiplicative identity, which is 1. Zero is also the additive identity: adding it to any number leaves that number unchanged. Without an additive identity there would be no additive inverses, so subtraction and the negative numbers could not be defined consistently.
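The arithmetic roles of zero described above can be checked with a few lines of Python. This is only a minimal sketch: the sample values and the names `samples`, `additive_identity_holds`, `zero_property_holds`, and `one_is_multiplicative_identity` are illustrative choices, not part of any library.

```python
from fractions import Fraction

# Illustrative sample values: integers, a negative number, and an exact fraction.
samples = [7, -3, 0, Fraction(5, 2)]

# Additive identity: x + 0 == x for every sample.
additive_identity_holds = all(x + 0 == x for x in samples)

# Zero property of multiplication: x * 0 == 0 for every sample.
zero_property_holds = all(x * 0 == 0 for x in samples)

# The multiplicative identity is 1, not 0: x * 1 == x for every sample.
one_is_multiplicative_identity = all(x * 1 == x for x in samples)

print(additive_identity_holds, zero_property_holds, one_is_multiplicative_identity)
```

A handful of samples does not prove the properties, of course; the check only shows what each one claims.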

Practical Applications

Recognizing 0 as a number has significant practical implications. In computing, for example, zero-based indexing is common in programming languages such as Python and C#. The first element of a sequence has index 0, so an index doubles as an offset from the start, which simplifies arithmetic on arrays and other data structures.

In finance and economics, zero represents the absence of a quantity or value. For instance, a bank account balance of zero indicates a lack of funds. This everyday use underlines zero’s essential practical role.
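To illustrate the zero-based indexing mentioned above for computing, here is a small Python sketch. The list `letters` and the helper `offset_of` are hypothetical names chosen for this example.

```python
letters = ["a", "b", "c", "d"]

first = letters[0]                 # the first element lives at index 0
last = letters[len(letters) - 1]   # the last valid index is length - 1

# range(n) yields 0, 1, ..., n - 1, which are exactly the valid indices.
indices = list(range(len(letters)))


def offset_of(items, value):
    """Return how many positions `value` sits from the start of `items`."""
    return items.index(value)


print(first, last, indices, offset_of(letters, "c"))
```

Because an index is also an offset, the element at index 0 is zero steps from the start of the list.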

Is 0 considered a natural number?

This is a matter of debate. Some definitions include 0 as a natural number, whereas others reserve the term for positive integers starting from 1. The context in which 0 is used often dictates its classification.
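The two conventions can be written out explicitly. The subscript and superscript notation below is one common way to tell them apart; other texts use different symbols, so treat the notation as an assumption rather than a fixed standard.

```latex
\mathbb{N}_0 = \{0, 1, 2, 3, \dots\}
\qquad
\mathbb{N}^{+} = \{1, 2, 3, \dots\}
```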

How does 0 influence mathematical operations?

0 influences mathematical operations in fundamental ways. It serves as the additive identity (any number plus zero remains unchanged) and as the absorbing element of multiplication (any number multiplied by zero results in zero). The multiplicative identity, by contrast, is 1.

In conclusion, whether or not 0 is classified as a number depends on the specific context and theoretical framework being applied. Its inclusion in various number sets and its critical role in arithmetic and practical applications underscore its importance in the mathematical landscape. By acknowledging 0’s unique role, we can better appreciate its contribution to the broader field of mathematics and beyond.